If you’ve been following the local AI scene, you probably know Qwopus: the open-source model that tried to distill Claude Opus 4.6’s reasoning into Alibaba’s Qwen, so you could run something resembling Opus on your own hardware for free. It worked surprisingly well. The obvious catch: Qwen is a Chinese model, and not everyone is comfortable with that.

Jackrong, the same pseudonymous developer behind that project, heard the feedback. His answer is Gemopus, a new family of Claude Opus-style fine-tunes built entirely on Google’s open-source Gemma 4. All-American DNA, same idea: frontier-level reasoning, running locally on hardware you already own.

The family comes in two flavors. Gemopus-4-26B-A4B is the heavier option, a Mixture of Experts model that has 26 billion total parameters but only activates around 4 billion during inference, which means it punches well above its weight on constrained hardware.

Parameters are what determine an AI’s capacity to learn, reason, and store information. Having 26 billion total parameters gives the model an enormous breadth of knowledge. But by only “waking up” about 4 billion of those parameters for each token it processes, it delivers the high-quality results of a much larger model while remaining lightweight enough to run smoothly on everyday hardware.
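For readers curious how “waking up” only part of a model works, here is a toy sketch of top-k expert routing in plain Python. The number of experts, the value of k, the scaling “experts”, and the router weights are all illustrative assumptions, not Gemma 4’s actual configuration; the point is only that a router scores every expert, picks a few per token, and never computes the rest.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def make_expert(scale):
    """Toy 'expert': scales the input vector (real experts are full MLP blocks)."""
    return lambda x: [scale * v for v in x]

def moe_layer(token, experts, router, k=2):
    """Send one token through only the top-k experts chosen by the router."""
    # One router score per expert: dot product of the token with that expert's router row.
    scores = [sum(t * w for t, w in zip(token, row)) for row in router]
    # Keep the k best-scoring experts; every other expert is skipped entirely.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Turn the chosen scores into mixing weights that sum to 1.
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        out = [o + gate * e for o, e in zip(out, experts[i](token))]
    return out, chosen

# Eight toy experts, two active per token (illustrative numbers only).
experts = [make_expert(s) for s in range(1, 9)]
router = [[float(i), -float(i)] for i in range(8)]  # hypothetical router weights
out, chosen = moe_layer([1.0, 0.0], experts, router, k=2)
print(chosen)                                      # indices of the experts that actually ran
print(f"{len(chosen) / len(experts):.0%} of experts active")
```

In this toy, six of the eight experts do no work for the token, which is the same idea, at a much smaller scale, as a 26B-parameter model only touching roughly 4B parameters per token.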

The other is Gemopus-4-E4B, a 4-billion-parameter edge model engineered to run comfortably on a modern iPhone or a thin-and-light MacBook, no GPU required.

The choice of base model matters here. Google’s Gemma 4, released on April 2, is built directly from the same research and technology as Gemini 3; the company said so explicitly at launch. That means Gemopus carries something no Qwen-based fine-tune can claim: the DNA of Google’s own state-of-the-art closed model under the hood, with Anthropic’s thinking style wrapped on top. The best of both worlds, more or less.

What sets Gemopus apart from the wave of other Gemma fine-tunes flooding Hugging Face right now is the philosophy behind it. Jackrong deliberately chose not to force Claude’s chain-of-thought reasoning traces into Gemma’s weights, a shortcut most competing releases take.

His argument, backed by recent research, is that stuffing a student model with a teacher’s surface-level reasoning text doesn’t actually transfer real reasoning ability. It teaches imitation, not logic. “There is no need for excessive imagination or superstitious replication of the Claude-style chain of thought,” the model card reads. Instead, he focused on answer quality, structural clarity, and conversational naturalness, fixing Gemma’s stiff Wikipedia tone and its tendency to lecture you about things you didn’t ask.

AI infrastructure engineer Kyle Hessling ran independent benchmarks and published the results directly on the model card. His verdict on the 26B variant was quite favorable. “Glad to have benched this one pretty hard and it’s a superb finetune of an already exceptional model,” he wrote on X. “It rocks at one-shot requests over long contexts, and runs incredibly fast thanks to the MOE (mixture of experts) architecture.”

Gemopus-4-26B-A4B from Jackrong is LIVE!

Glad to have benched this one pretty hard (see my benches in the model card) and it’s a superb finetune of an already exceptional model! My friend Jackrong is always cooking the best!

It rocks at one-shot requests over long…

— Kyle Hessling (@KyleHessling1) April 10, 2026

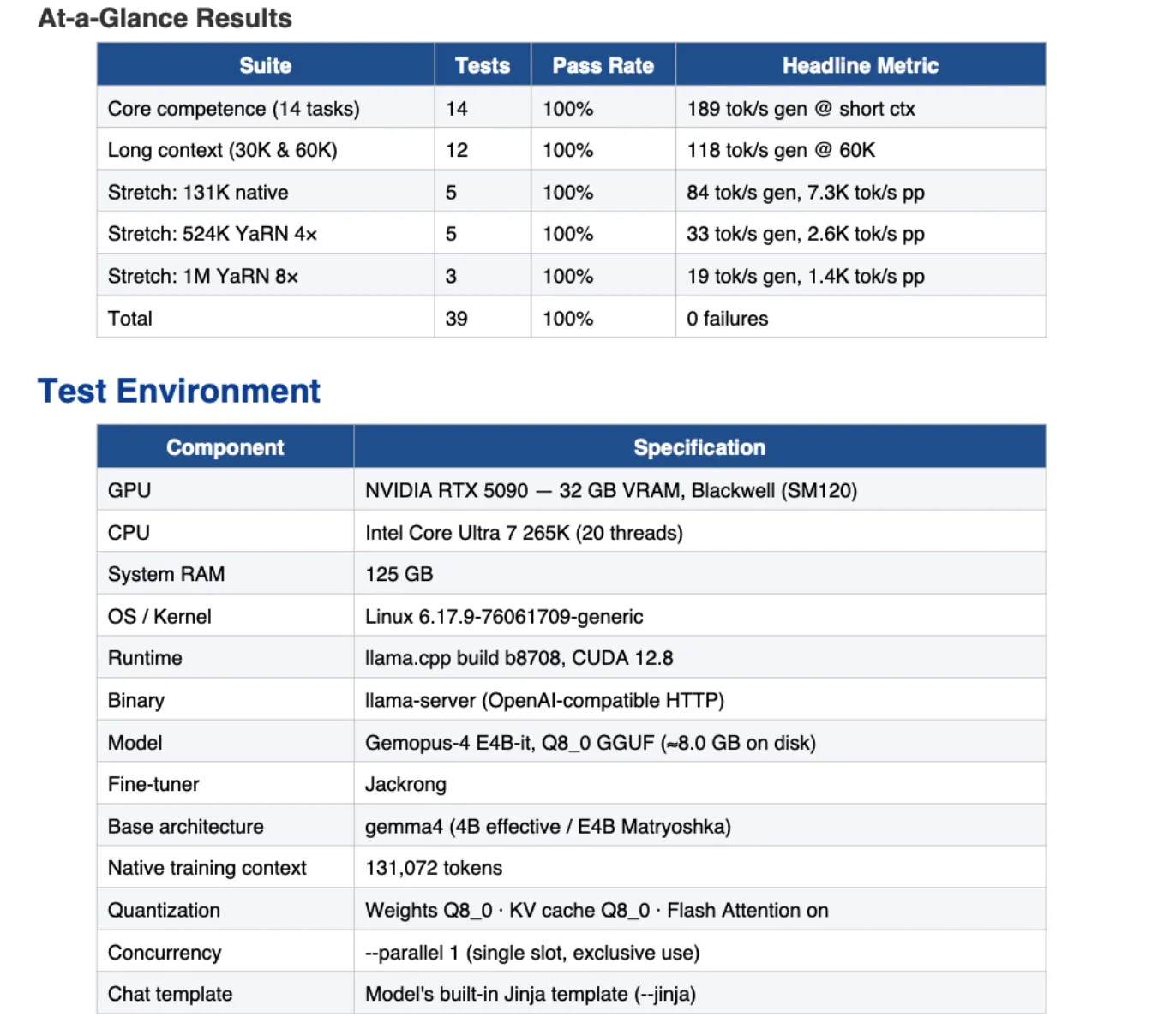

The smaller E4B variant passed all 14 core competence tests (instruction following, coding, math, multi-step reasoning, translation, safety, caching) and cleared all 12 long-context tests at 30K and 60K tokens. On needle-in-a-haystack retrieval, it passed 13 out of 13 probes, including a stretch test at one million tokens with YaRN 8× RoPE scaling.

The 26B extends natively to 131K context and all the way out to 524K with YaRN, which Hessling also stress-tested: “It also crushed my simple needle-in-the-haystack tests all the way out to an extended context of 524k!”
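Needle-in-a-haystack tests follow a simple pattern: hide one fact deep inside a long stretch of irrelevant text, then ask the model to retrieve it. The sketch below builds one such probe and scores a reply. The filler sentences, the passphrase, and the substring-match scoring rule are illustrative assumptions, not Hessling’s actual harness; a real run would scale the filler to tens or hundreds of thousands of tokens and sweep the needle’s depth.

```python
import random

def build_haystack(needle, filler, n_sentences, depth, seed=0):
    """Bury one 'needle' sentence at a relative depth (0.0 = start, 1.0 = end) in filler text."""
    rng = random.Random(seed)                      # seeded so the probe is reproducible
    sentences = [rng.choice(filler) for _ in range(n_sentences)]
    position = round(depth * n_sentences)          # index where the needle is inserted
    sentences.insert(position, needle)
    return " ".join(sentences), position

def probe_passed(answer, secret):
    """Score a probe: pass if the model's reply contains the hidden value (case-insensitive)."""
    return secret.lower() in answer.lower()

filler = ["The sky was grey that morning.", "Trains ran on time.", "Coffee was served at nine."]
needle = "The secret passphrase is blue-harbor-42."
haystack, pos = build_haystack(needle, filler, n_sentences=1000, depth=0.5)
prompt = haystack + "\n\nWhat is the secret passphrase?"
# In a real run, `prompt` would be sent to the model; here we score a hypothetical reply.
print(pos, probe_passed("It is BLUE-HARBOR-42.", "blue-harbor-42"))
```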

On edge hardware, the E4B is genuinely fast. Jackrong reports 45–60 tokens per second on an iPhone 17 Pro Max, and 90–120 tokens per second on a MacBook Air M3/M4 via MLX. The 26B’s MoE architecture means it offloads gracefully on unified memory systems or GPUs with under 10GB of VRAM. Hessling called it his daily-driver recommendation for VRAM-starved setups.
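A rough back-of-envelope calculation suggests why a 26B MoE can still be usable on a card with under 10GB of VRAM. The 4.5 bits-per-weight figure is an assumption standing in for a typical 4-bit GGUF quantization, and the estimate ignores the KV cache and runtime overhead, so treat the numbers as illustrative only.

```python
def weights_gb(params_billion, bits_per_weight):
    """Approximate weight memory in GB: parameters x bits / 8 (ignores KV cache and overhead)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9

BITS = 4.5  # assumption: a ~4-bit GGUF quant averages a little over 4 bits per weight

total = weights_gb(26, BITS)    # all experts must be stored somewhere (VRAM or system RAM)
active = weights_gb(4, BITS)    # weights actually read for any single token
print(f"total ~ {total:.1f} GB, active per token ~ {active:.2f} GB")
```

Under these assumptions the full set of weights does not fit in 10GB, but only a small slice is read for each token, which is why splitting an MoE model between VRAM and system memory costs less speed than it would for a dense model of the same total size.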

Both models are available in GGUF format, which means you can drop them straight into LM Studio or llama.cpp without configuration. The full training code and a step-by-step fine-tuning guide are on Jackrong’s GitHub: the same pipeline he used for Qwopus, the same Unsloth and LoRA setup, reproducible on Colab.
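LoRA, the technique in that Unsloth setup, avoids updating the full model: the original weight matrix W stays frozen, and only two thin matrices B and A are trained, with their product scaled by alpha / rank and added to W. The sketch below shows that update and the resulting parameter savings. It is the generic LoRA formulation, not code from Jackrong’s repository, and the 4096×4096 layer size and rank 16 are hypothetical.

```python
def matmul(X, Y):
    """Plain-Python matrix product of X (n x m) and Y (m x p)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]

def merge_lora(W, B, A, alpha, rank):
    """Return W + (alpha / rank) * (B @ A): the frozen weight plus the low-rank update."""
    delta = matmul(B, A)
    scale = alpha / rank
    return [[w + scale * d for w, d in zip(w_row, d_row)] for w_row, d_row in zip(W, delta)]

def lora_trainable_params(d_out, d_in, rank):
    """Trainable values in B (d_out x rank) plus A (rank x d_in); W itself stays frozen."""
    return rank * (d_out + d_in)

# Tiny worked example: a 2x2 identity weight nudged by a rank-1 update.
W = [[1.0, 0.0], [0.0, 1.0]]
B = [[1.0], [0.0]]   # 2 x 1
A = [[0.0, 1.0]]     # 1 x 2
print(merge_lora(W, B, A, alpha=2, rank=1))

# Parameter savings for a hypothetical 4096 x 4096 projection at rank 16.
d_out, d_in, rank = 4096, 4096, 16
lora = lora_trainable_params(d_out, d_in, rank)
full = d_out * d_in
print(lora, full, f"{lora / full:.2%}")
```

At rank 16, the adapter trains well under 1% of the values in that one matrix, which is a large part of why LoRA fine-tunes fit on modest hardware.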

Gemopus isn’t without its rough edges. Tool calling remains broken across the entire Gemma 4 series in llama.cpp and LM Studio (call failures, format mismatches, loops), so if your workflow depends on agents using external tools, this isn’t your model yet. Jackrong himself calls it “an engineering exploration reference rather than a fully production-ready solution,” and recommends his own Qwopus 3.5 series for anyone who needs something more stable for real workloads.

And because Jackrong deliberately avoided aggressive Claude-style chain-of-thought distillation, don’t expect it to feel as deeply Opus-brained as Qwopus. That was a conscious tradeoff for stability, not an oversight.

Yeah the philosophy on this one was stability first, it’s my understanding that the Gemma models tend to become unstable if you force a bunch of Claude thinking traces into them, you can see this when testing many other Opus gemma fine tunes on hugging face.

Jackrong tried a…

— Kyle Hessling (@KyleHessling1) April 10, 2026

For those who want to go deeper into Gemma fine-tuning for reasoning specifically, there’s also a separate community project worth watching: Ornstein, by pseudonymous developer DJLougen, which takes the same 26B Gemma 4 base and focuses on improving its reasoning chains without relying on the logic or style of any particular third-party model.

One honest caveat: Gemma’s training dynamics are messier than Qwen’s for fine-tuners, with wider loss fluctuations and more hyperparameter sensitivity. Jackrong says so himself. If you need a more battle-tested local model for production workflows, his Qwopus 3.5 series remains more robustly validated. But if you want an American model with Opus-style polish, Gemopus is currently your best available option. A denser 31B Gemopus variant is also in the pipeline, with Hessling teasing it as “a banger for sure.”

If you want to try running local models on your own hardware, check our guide on how to get started with local AI.
